Pay per narration

We shipped a public API for PodRead, the app that takes text, converts it to audio, and places it in your personal podcast feed so you can listen along with everything else.

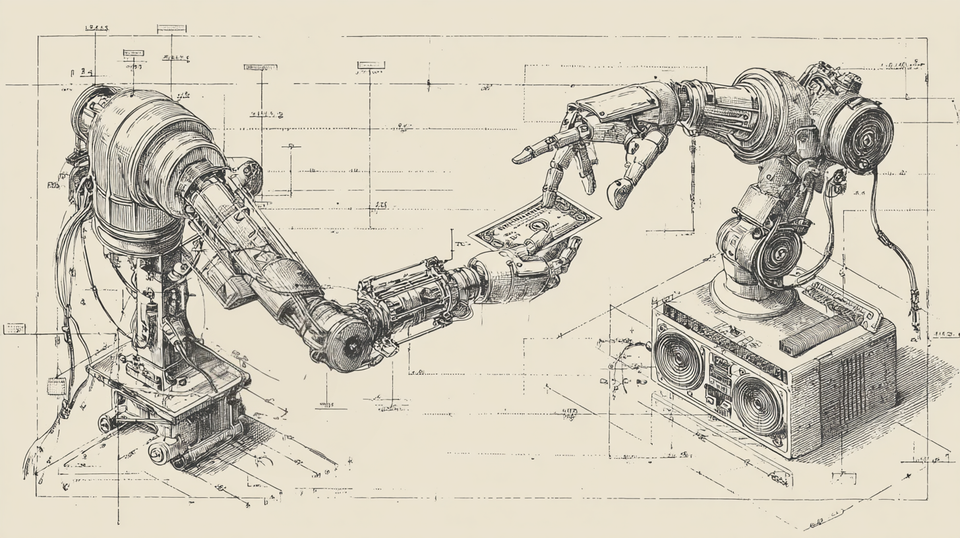

AI agents are starting to have wallets. More and more commerce is going to happen on our behalf. I don't know when agentic commerce takes off, but I'd like to have the doorway open for when it does.

PodRead is the first text-to-speech tool on the Machine Payments Protocol (MPP). You POST to an endpoint with no auth. You get a 402 back with a challenge that says here's the price and here's how we accept payment, in machine-friendly terms. Your client signs an on-chain stablecoin transfer on the Tempo network. You retry with the signed credential. You get an audio file.

This is the equivalent of reaching for an item on display: the storekeeper tells you the price, you hand over enough cash, and you walk away with the item. It just happens on the internet, between servers.

Tempo and stablecoins keep this in the decidedly unsketchy region of the crypto landscape. MPP is backed by industry leaders like my employer, Stripe.

Here's the whole flow, which I invite you to try out for yourself today.

    npx mppx -X POST \
      -J '{"text":"hello world","source_type":"text","title":"Baby\u0027s first machine to machine payment","author":"me"}' \
      https://podread.app/api/v1/mpp/narrations

mppx is the reference client. It handles the 402 → sign → retry for you. You need some pathUSD on Tempo mainnet. 75 cents gets you a Standard voice. $1.50 gets you Premium.
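For the curious, here is roughly what that 402 → sign → retry loop looks like from the client's side. This is a minimal Python sketch, not mppx's actual code. The `send` and `sign` callables are hypothetical stand-ins for the HTTP call and the wallet, and the header names (`WWW-Authenticate` for the challenge, `Authorization` for the credential) are assumptions for illustration; check the MPP specification for the real wire format.

```python
from typing import Callable, Dict, Tuple

Response = Tuple[int, Dict[str, str], bytes]  # (status, headers, body)
Send = Callable[[Dict[str, str]], Response]   # hypothetical: performs the POST with these headers
Sign = Callable[[str], str]                   # hypothetical: turns a challenge into a credential


def pay_and_retry(send: Send, sign: Sign) -> bytes:
    """Run one 402 -> sign -> retry cycle and return the response body."""
    status, headers, body = send({})  # first attempt carries no auth at all
    if status != 402:
        if status >= 400:
            raise RuntimeError(f"request failed with HTTP {status}")
        return body  # the server did not ask for payment

    # Assumption: the price and payment terms arrive in this header.
    challenge = headers.get("WWW-Authenticate")
    if challenge is None:
        raise RuntimeError("402 response carried no payment challenge")

    # Assumption: the signed credential goes back in Authorization.
    credential = sign(challenge)
    status, headers, body = send({"Authorization": credential})
    if status >= 400:
        raise RuntimeError(f"payment was not accepted: HTTP {status}")
    return body


# A fake server and wallet, so the loop can be exercised without a network
# or real funds. The challenge and credential strings are made up.
def fake_send(headers: Dict[str, str]) -> Response:
    if "Authorization" not in headers:
        return 402, {"WWW-Authenticate": "example-challenge"}, b""
    return 200, {}, b"audio-bytes"


audio = pay_and_retry(fake_send, lambda challenge: "signed:" + challenge)
```

Passing `send` and `sign` in as functions keeps the retry logic separate from the wallet, which is the part you most want to be able to swap out or mock.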

For my developer friends and users who haven't yet handed their wallets to Claude Code, there's a second door. Sign up, mint a token at /settings/api_tokens, POST to /api/v1/episodes with a Bearer header. Same text, same voices, attached to your personal podcast feed. No blockchain or challenge. For most humans this is the path.
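The token path can be sketched in a few lines of stdlib Python. Two assumptions here: that the endpoint lives on the same `podread.app` host as the MPP one, and that it accepts the same JSON fields as the MPP example above. `build_episode_request` is a hypothetical helper name, and the documentation is the authority on the actual request body.

```python
import json
import urllib.request

# Assumption: same host as the MPP endpoint; the path is from this post.
API_URL = "https://podread.app/api/v1/episodes"


def build_episode_request(token: str, text: str, title: str, author: str) -> urllib.request.Request:
    """Build (but do not send) an authenticated POST for a new episode."""
    # Assumption: field names mirror the MPP narration example.
    payload = {"text": text, "source_type": "text", "title": title, "author": author}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # token minted at /settings/api_tokens
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_episode_request("YOUR_TOKEN", "hello world", "My first episode", "me")
```

To actually send it, pass `request` to `urllib.request.urlopen` with a real token in place of `YOUR_TOKEN`.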

API access has been on the roadmap for a while. It's live now, for humans and agents alike.

Read the full specification in our documentation, or just share it with your agent.
